Over the years people have come to expect search engines to automatically detect intent and provide great search results for text queries typed into a single search box. Now Bing takes the first step to achieve the same for images.

The Bing Team sets out to connect your camera to a deep search experience.

We started several years ago by introducing the ‘Search By Image’ capability. This allowed a user to specify an entire image to be used as a search query. But what if you only want to search for a certain object you saw in an internet image, or one you photographed? So far you'd have to jump through hoops to accomplish this, but not anymore. In this post we will share some recent work we’ve done to solve this challenge and some of the technical wizardry that made it possible.

Introducing Bing Visual Search

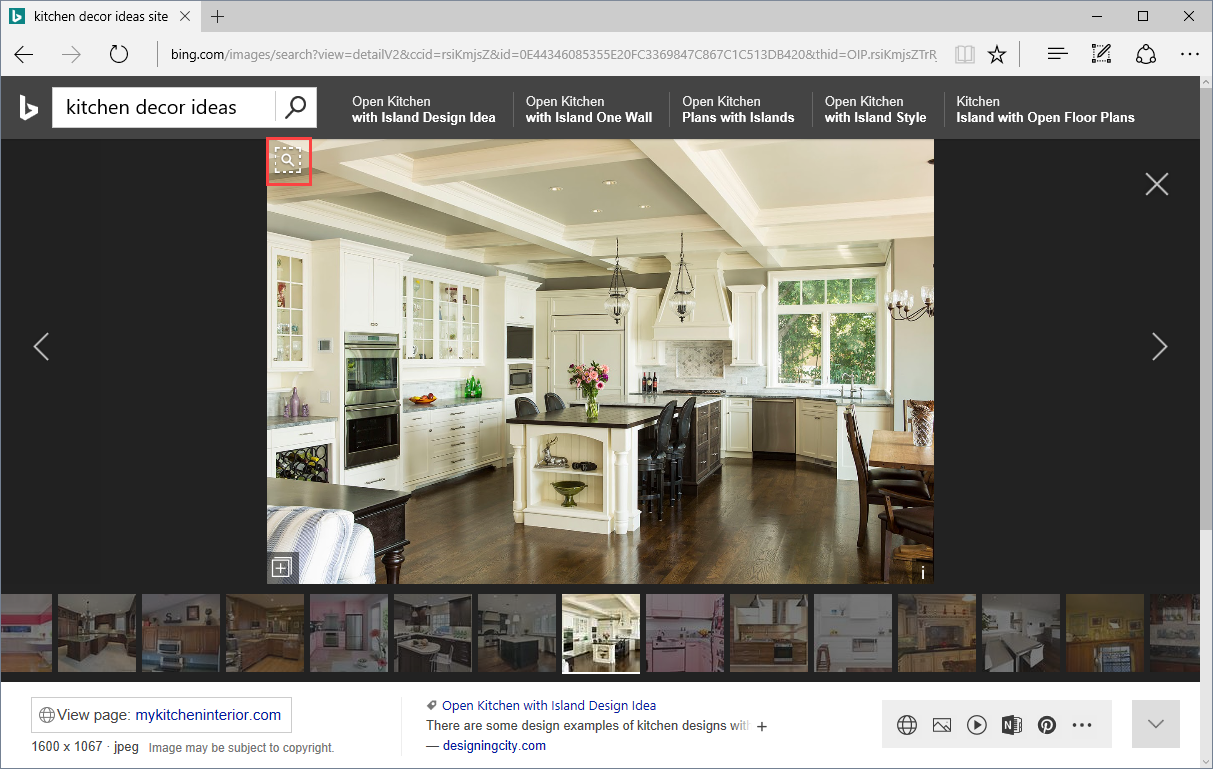

Let's say you are looking for kitchen decoration inspiration, and an image attracted your attention. You click on a thumbnail result to get to the ‘Detail View’. You really like the overall décor, but you are particularly interested in that nice-looking chandelier. Would it be possible to see where you can get one just like that? With Bing Visual Search, now you can!

In the Detail View, you will now see a magnifying glass symbol in the top left of the image. It is called the visual search button.

Clicking the visual search button displays a visual search box on the image. You can click and drag this box to adjust it to cover just the object of your interest. You can also simply draw a box around the chandelier if that’s more convenient.

Every time you adjust the visual search box, Bing instantly runs a visual search using the selected portion of the image as the query.

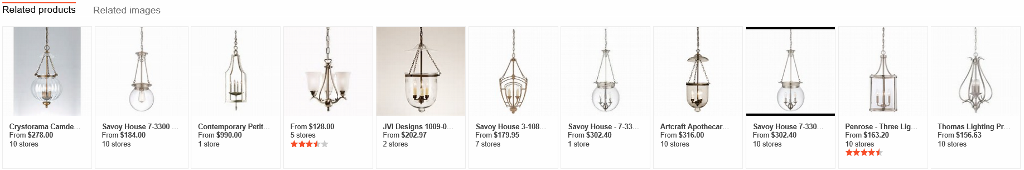

We realize that many Bing image search users may be shopping for an item they see in an image, or for a similar product. We automatically detect shopping intent and, in addition to the regular image search, we also run a product search to find matching products.

Now you can simply click on the chandelier that is right for you, pick the best merchant on the detail page and finalize your purchase!

If you're not in a shopping mood after all, you can still click on “Related Images” to continue exploration of similar images. We are continuously working to detect more intents and bring the best information to the results to satisfy your search needs.

After you’ve found the perfect chandelier options for your project, it’s easy to conduct a visual search for other items. For instance, in our example image you can select that beautiful bowl and find a similar one for your kitchen. You can search for any object you see in the image.

Visual search is in its infancy, and we are aware of cases where there is still room for improvement. For example, you may need to tweak your visual search box to fully capture the object of interest to get the best results.

All this goodness is available on your PC or mobile device by visiting Bing.com or in the Bing mobile app. Developers can build visual search into their app using Bing APIs as described here.

Please try out our visual search - just be careful as it can get quite addictive! Let us know what you think using Bing Listens or the Feedback button on Bing.

Under the hood

So how does it all work?

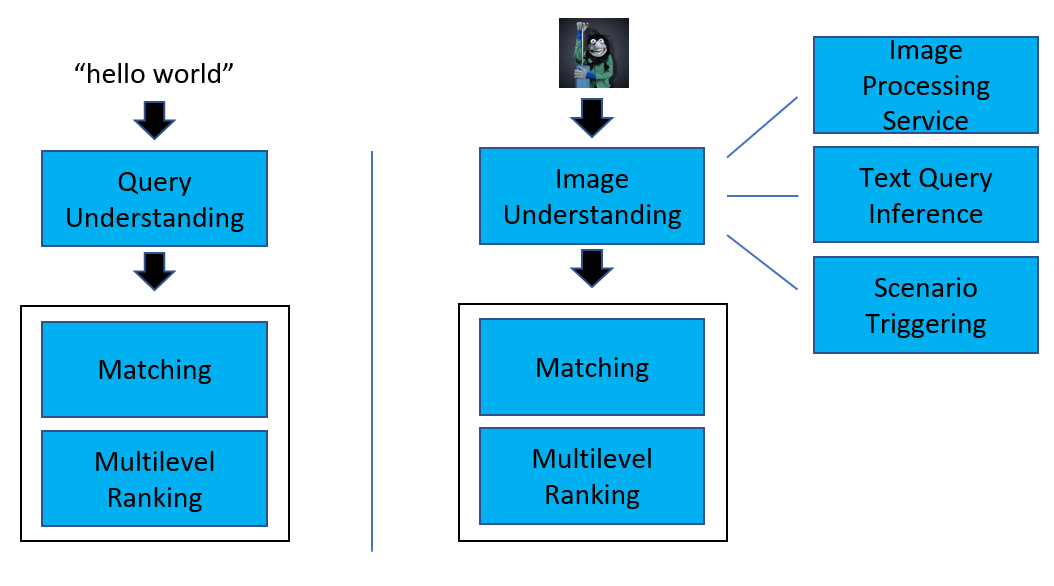

In text-based search, the first step is query understanding. Similarly, in visual search, we first need to understand the query image. The major difference is that instead of query terms, we now need another way to represent the query-image. Once the query understanding phase is complete, the subsequent steps in the execution of the query are similar to those of text search.

As a first step in the query-image understanding process, we run the Image Processing Service, which performs object detection and extracts various image features, including DNN features, recognition features, and additional features used for duplicate detection.

Next comes the text query inference step. Here we try to generate the best text query to represent the input image. This is based on a pipeline widely used in Bing image search to identify so-called BRQs (for 'best representative query'). You can learn more about how this process works in our earlier post, The Image Graph - Powering the Next Generation of Bing Image Search.

Subsequently, we run a triggering model to identify different scenarios for search by image. Here we leverage the expertise already used in Bing answers triggering. For instance, if we detect that the query-image has shopping intent, we show a rich, segment-specific experience.

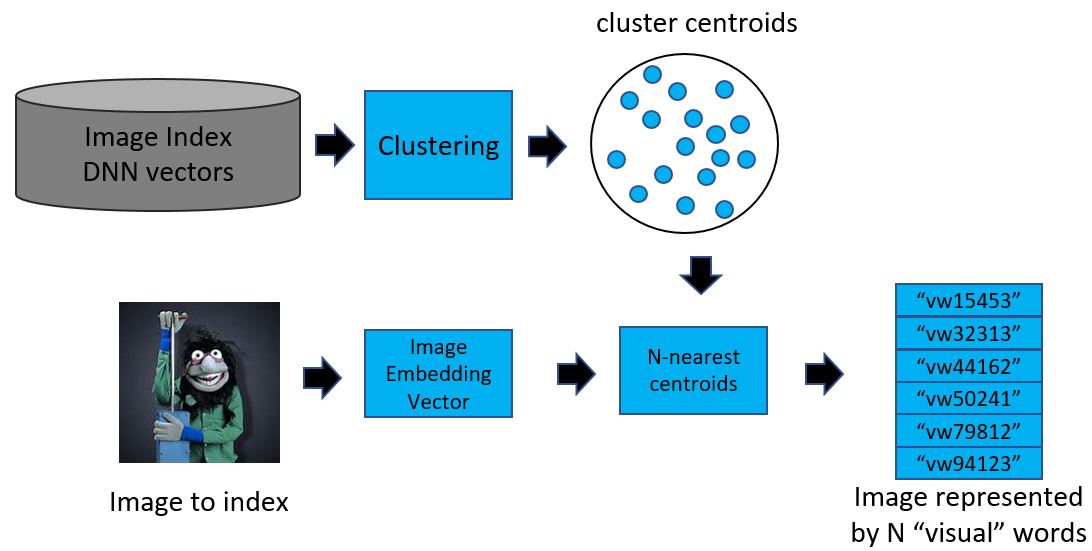

Once the image understanding phase is complete, we enter the next step: matching. In order to implement search by image inside the existing Bing index serve stack, which was designed mostly for text search, we need a text-like representation of the image feature vector. To accomplish this we employ a technique known in the computer vision field as Visual Words. This technique quantizes a dense feature vector into a set of discrete visual words. Each visual word essentially stands for a cluster of similar feature vectors, and the clusters are obtained using the joint k-means algorithm. The visual words are then used to narrow down the set of candidates from billions to several million.
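To make the idea concrete, here is a minimal sketch of how a dense feature vector could be turned into text-like tokens and matched through an inverted index. It is an illustration under stated assumptions, not the Bing implementation: the centroids are assumed to be already trained (the joint k-means training itself is not shown), splitting the vector into equal chunks with one codebook per chunk is an assumption, and every name here (`to_visual_words`, `build_inverted_index`, `match_candidates`, `CODEBOOKS`, `IMAGES`) is hypothetical.

```python
import math
from collections import defaultdict


def nearest_centroid(vec, centroids):
    """Return the index of the centroid closest to vec (squared Euclidean)."""
    best, best_dist = 0, math.inf
    for idx, centroid in enumerate(centroids):
        dist = sum((a - b) ** 2 for a, b in zip(vec, centroid))
        if dist < best_dist:
            best, best_dist = idx, dist
    return best


def to_visual_words(feature, codebooks):
    """Split the feature into equal chunks and map each chunk to a token.

    Assumption: one pre-trained codebook per chunk; the token encodes the
    chunk position and the id of the nearest centroid.
    """
    chunk = len(feature) // len(codebooks)
    words = []
    for i, centroids in enumerate(codebooks):
        sub = feature[i * chunk:(i + 1) * chunk]
        words.append(f"vw{i}_{nearest_centroid(sub, centroids)}")
    return words


def build_inverted_index(images, codebooks):
    """Map every visual word to the set of image ids that contain it."""
    index = defaultdict(set)
    for image_id, feature in images.items():
        for word in to_visual_words(feature, codebooks):
            index[word].add(image_id)
    return index


def match_candidates(query_feature, index, codebooks, min_shared=1):
    """Return ids of images sharing at least min_shared words with the query."""
    counts = defaultdict(int)
    for word in to_visual_words(query_feature, codebooks):
        for image_id in index.get(word, ()):
            counts[image_id] += 1
    return {image_id for image_id, n in counts.items() if n >= min_shared}


# Toy data (hypothetical): 4-dimensional features, two codebooks of two centroids.
CODEBOOKS = [
    [[0.0, 0.0], [1.0, 1.0]],
    [[0.0, 1.0], [1.0, 0.0]],
]
IMAGES = {
    "chandelier_a": [0.1, 0.0, 0.1, 0.9],
    "chandelier_b": [0.0, 0.2, 0.9, 0.1],
    "bowl": [0.9, 1.0, 1.0, 0.1],
}
INDEX = build_inverted_index(IMAGES, CODEBOOKS)
QUERY = [0.0, 0.1, 0.2, 0.8]

print(to_visual_words(QUERY, CODEBOOKS))
print(match_candidates(QUERY, INDEX, CODEBOOKS))
```

Because the tokens behave like ordinary terms, a stack built for text retrieval can look them up without ever touching the dense vectors. Raising `min_shared` tightens the match at the cost of recall.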

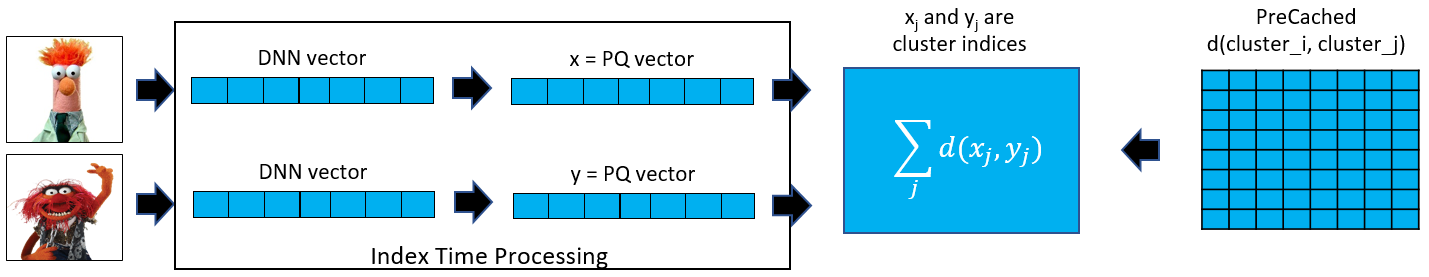

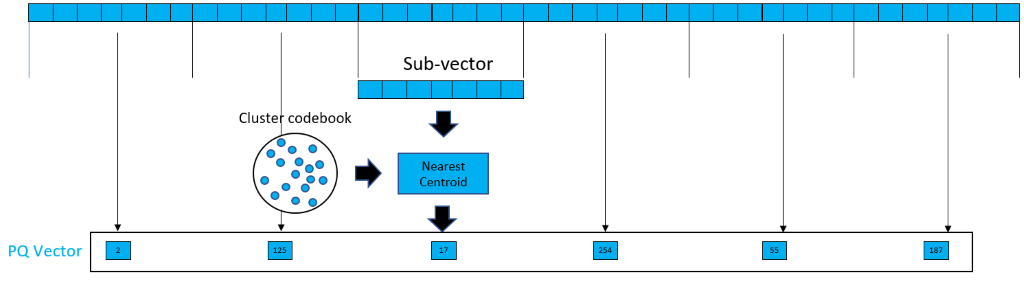

After the matching step, we enter the stage of multilevel ranking. We need to rank millions of image candidates, and we do that based on feature vector distances. To speed up the calculations, we use an innovative algorithm developed by Microsoft Research in collaboration with the University of Science and Technology of China called Optimized Product Quantization (for more information see the paper: Optimized Product Quantization for Approximate Nearest Neighbor Search). The main idea is to decompose the original high-dimensional vector into many low-dimensional sub-vectors that are then quantized separately as pictured below.

After quantization is complete we need to calculate distances between the query-image and result-image vectors. Instead of using the usual Euclidean distance calculation, we perform a table lookup against a set of pre-calculated values to speed things up even further.
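The decomposition and the table lookup can be sketched as follows. This is a simplified illustration of plain product quantization with pre-computed distance tables; it leaves out the learned rotation that distinguishes Optimized Product Quantization, and it assumes the codebooks have already been trained. All names and the toy data are hypothetical.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def split(vec, m):
    """Decompose vec into m equal-length sub-vectors."""
    size = len(vec) // m
    return [vec[i * size:(i + 1) * size] for i in range(m)]


def encode(vec, codebooks):
    """Quantize each sub-vector separately: keep only the nearest centroid id."""
    return [
        min(range(len(cb)), key=lambda k: sq_dist(sub, cb[k]))
        for sub, cb in zip(split(vec, len(codebooks)), codebooks)
    ]


def build_tables(query, codebooks):
    """Pre-compute, per sub-space, the distance from the query to every centroid."""
    return [
        [sq_dist(sub, centroid) for centroid in cb]
        for sub, cb in zip(split(query, len(codebooks)), codebooks)
    ]


def approx_dist(code, tables):
    """Approximate squared distance: one table lookup per sub-space, then a sum."""
    return sum(table[k] for table, k in zip(tables, code))


# Toy data (hypothetical): 4-dimensional vectors, two sub-spaces, two centroids each.
PQ_CODEBOOKS = [
    [[0.0, 0.0], [1.0, 1.0]],
    [[0.0, 0.0], [2.0, 2.0]],
]
CODES = {
    "a": encode([0.1, 0.1, 1.9, 2.0], PQ_CODEBOOKS),
    "b": encode([0.9, 1.0, 0.1, 0.0], PQ_CODEBOOKS),
}
TABLES = build_tables([0.0, 0.0, 2.0, 2.0], PQ_CODEBOOKS)

for image_id, code in CODES.items():
    print(image_id, code, approx_dist(code, TABLES))
```

The tables are built once per query, so scoring each candidate costs only as many lookups and additions as there are sub-spaces, regardless of the original vector dimension.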

As a result of Optimized Product Quantization, we have reduced the candidate set from millions to thousands. At this point we can perform a more expensive operation to rank the images more accurately. After multiple levels of ranking, the de-duplication step is executed to remove any duplicate images from the results. The set of images we end up with after this step is the final result set that will be returned to the user.
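The overall flow of cheap ranking, expensive re-ranking, and de-duplication might look like the sketch below. It only illustrates the control flow: the scoring functions, the `fingerprint` field used to spot duplicates, and the `keep` cutoff are all hypothetical stand-ins, and lower scores are assumed to mean closer matches.

```python
import heapq


def multilevel_rank(candidates, cheap_score, expensive_score, dup_key, keep):
    """Rank in two levels, then drop duplicates while preserving order."""
    # Level 1: a cheap approximate score over every candidate; keep the best few.
    shortlist = heapq.nsmallest(keep, candidates, key=cheap_score)
    # Level 2: a more expensive, more accurate score over the shortlist only.
    ranked = sorted(shortlist, key=expensive_score)
    # De-duplication: keep the best-ranked image for each duplicate key.
    seen, results = set(), []
    for candidate in ranked:
        key = dup_key(candidate)
        if key not in seen:
            seen.add(key)
            results.append(candidate)
    return results


# Toy candidates (hypothetical scores and fingerprints).
CANDIDATES = [
    {"id": "img1", "approx": 0.2, "exact": 0.30, "fingerprint": "A"},
    {"id": "img2", "approx": 0.1, "exact": 0.35, "fingerprint": "A"},
    {"id": "img3", "approx": 0.4, "exact": 0.10, "fingerprint": "B"},
    {"id": "img4", "approx": 0.9, "exact": 0.05, "fingerprint": "C"},
]

RESULTS = multilevel_rank(
    CANDIDATES,
    cheap_score=lambda c: c["approx"],
    expensive_score=lambda c: c["exact"],
    dup_key=lambda c: c["fingerprint"],
    keep=3,
)
print([c["id"] for c in RESULTS])
```

Note that `img4` never reaches the expensive stage even though its exact score is the best. That is the trade-off of any multilevel scheme: the cheap level has to be accurate enough that good candidates survive the cut.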

What’s Next?

We’re constantly working to make the experience better. For example, you may soon notice that we automatically help you pick objects without needing to draw a box, and provide other tools to help refine your search. We’re also continually focused on bringing you the most comprehensive and highest-quality visual search results.

Let us know what you think and stay tuned for more improvements!

- The Bing Team